Panoptic Segmentation

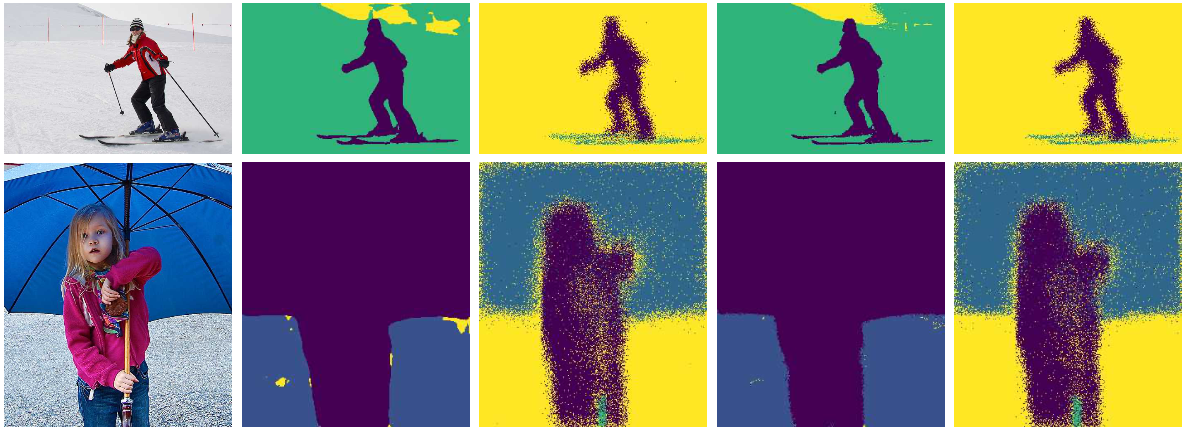

Research on panoptic segmentation using conditional random fields. The work developed a novel information fusion layer that combines the outputs of semantic and instance segmentation networks. The Bipartite Conditional Random Field (BCRF) uses an energy function containing cross-potential terms between the outputs of two parallel deep neural network heads to produce an optimal panoptic segmentation conditioned on the original image. The BCRF layer uses a cross-compatibility function between each pair of thing and stuff classes, which is learned entirely from the data distribution. Our work achieved state-of-the-art performance on selected popular datasets. The novel layer is also more interpretable, and the compatibilities it learns are in line with our intuition about which classes go together. Code for our work is hosted here. Our BMVC publication and oral presentation can be found here.
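To make the idea of a cross-potential term more concrete, here is a minimal NumPy sketch of how a compatibility matrix could couple the beliefs of two parallel heads. It is an illustration under stated assumptions, not the BCRF formulation from the paper: the function names `cross_energy` and `cross_potential_step`, the array shapes, the reading of `compat` as a penalty matrix, and the single mean-field-style update are all hypothetical, and the sketch couples semantic labels with instance labels per pixel instead of modelling thing and stuff classes explicitly.

```python
import numpy as np


def softmax(x, axis=-1):
    """Numerically stable softmax along the given axis."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def cross_energy(q_sem, q_inst, compat):
    """Expected cross-potential energy summed over pixels.

    q_sem:  (P, S) per-pixel distribution over semantic labels
    q_inst: (P, K) per-pixel distribution over instance labels
    compat: (S, K) penalty for pairing semantic label s with instance label k
            (assumed here; in a trained model this matrix would be learned)
    """
    return float(np.einsum("ps,sk,pk->", q_sem, compat, q_inst))


def cross_potential_step(sem_logits, inst_logits, compat):
    """One illustrative mean-field-style update coupling the two heads.

    Each head's logits are lowered by the penalty it is expected to incur
    given the other head's current beliefs, then renormalised.
    """
    q_sem = softmax(sem_logits)
    q_inst = softmax(inst_logits)
    sem_new = softmax(sem_logits - q_inst @ compat.T)
    inst_new = softmax(inst_logits - q_sem @ compat)
    return sem_new, inst_new


if __name__ == "__main__":
    # Toy case: one pixel, two semantic labels, two instance labels.
    compat = np.array([[0.0, 5.0], [5.0, 0.0]])  # penalise mismatched pairs
    sem_logits = np.array([[2.0, 0.0]])   # semantic head is fairly confident
    inst_logits = np.array([[0.0, 0.5]])  # instance head mildly disagrees
    before = cross_energy(softmax(sem_logits), softmax(inst_logits), compat)
    sem_new, inst_new = cross_potential_step(sem_logits, inst_logits, compat)
    after = cross_energy(sem_new, inst_new, compat)
    print(f"cross energy before: {before:.3f}, after: {after:.3f}")
```

In this toy case the confident semantic head pulls the uncertain instance head toward the compatible label, which lowers the cross energy. A learned compatibility matrix of this kind can also be inspected directly, which is one way such a layer lends itself to interpretation.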